+

+

Open-source project management that unlocks customer value

+Modern project management for all teams

@@ -25,14 +24,7 @@

+

-

-

+

+

-

-

- Configure instance-wide settings to secure your instance

-  -

-

-

-

-

-

@@ -48,13 +40,13 @@ Meet [Plane](https://plane.so/), an open-source project management tool to track

Getting started with Plane is simple. Choose the setup that works best for you:

- **Plane Cloud**

-Sign up for a free account on [Plane Cloud](https://app.plane.so)—it's the fastest way to get up and running without worrying about infrastructure.

+ Sign up for a free account on [Plane Cloud](https://app.plane.so)—it's the fastest way to get up and running without worrying about infrastructure.

- **Self-host Plane**

-Prefer full control over your data and infrastructure? Install and run Plane on your own servers. Follow our detailed [deployment guides](https://developers.plane.so/self-hosting/overview) to get started.

+ Prefer full control over your data and infrastructure? Install and run Plane on your own servers. Follow our detailed [deployment guides](https://developers.plane.so/self-hosting/overview) to get started.

-| Installation methods | Docs link |

-| -------------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------ |

+| Installation methods | Docs link |

+| -------------------- | --------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| Docker | [](https://developers.plane.so/self-hosting/methods/docker-compose) |

| Kubernetes | [](https://developers.plane.so/self-hosting/methods/kubernetes) |

@@ -63,58 +55,58 @@ Prefer full control over your data and infrastructure? Install and run Plane on

## 🌟 Features

- **Issues**

-Efficiently create and manage tasks with a robust rich text editor that supports file uploads. Enhance organization and tracking by adding sub-properties and referencing related issues.

+ Efficiently create and manage tasks with a robust rich text editor that supports file uploads. Enhance organization and tracking by adding sub-properties and referencing related issues.

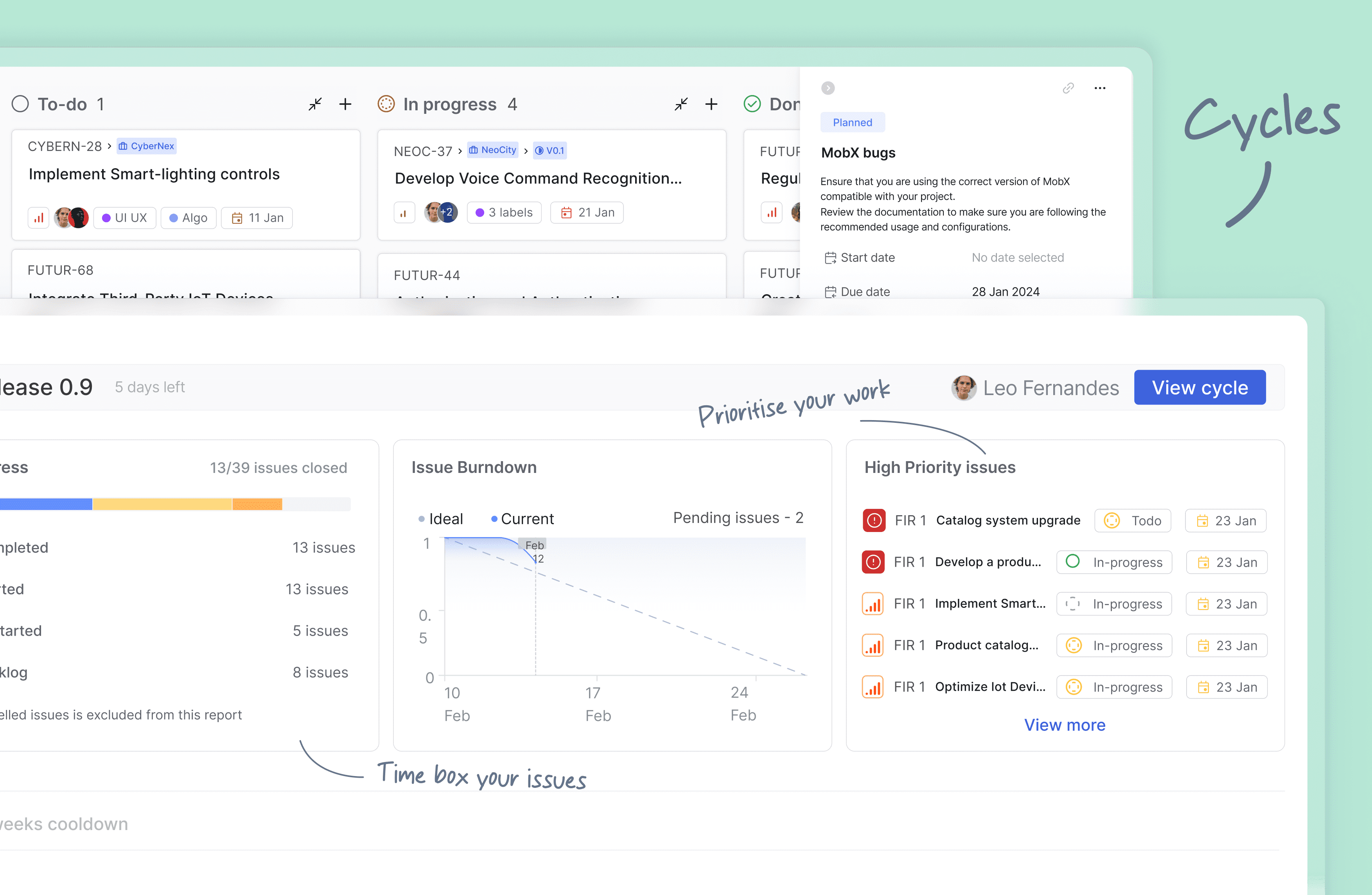

- **Cycles**

-Maintain your team’s momentum with Cycles. Track progress effortlessly using burn-down charts and other insightful tools.

+ Maintain your team’s momentum with Cycles. Track progress effortlessly using burn-down charts and other insightful tools.

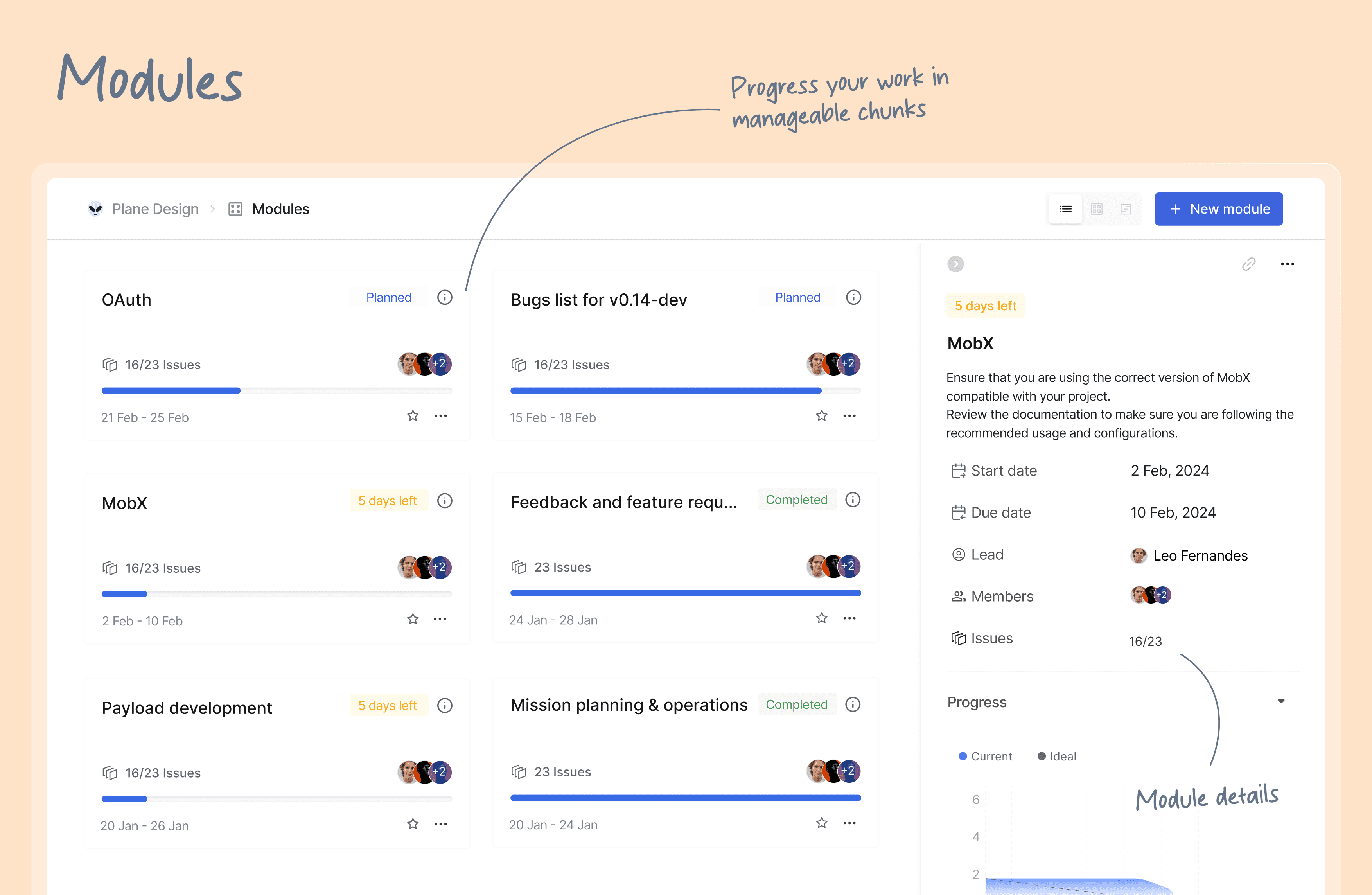

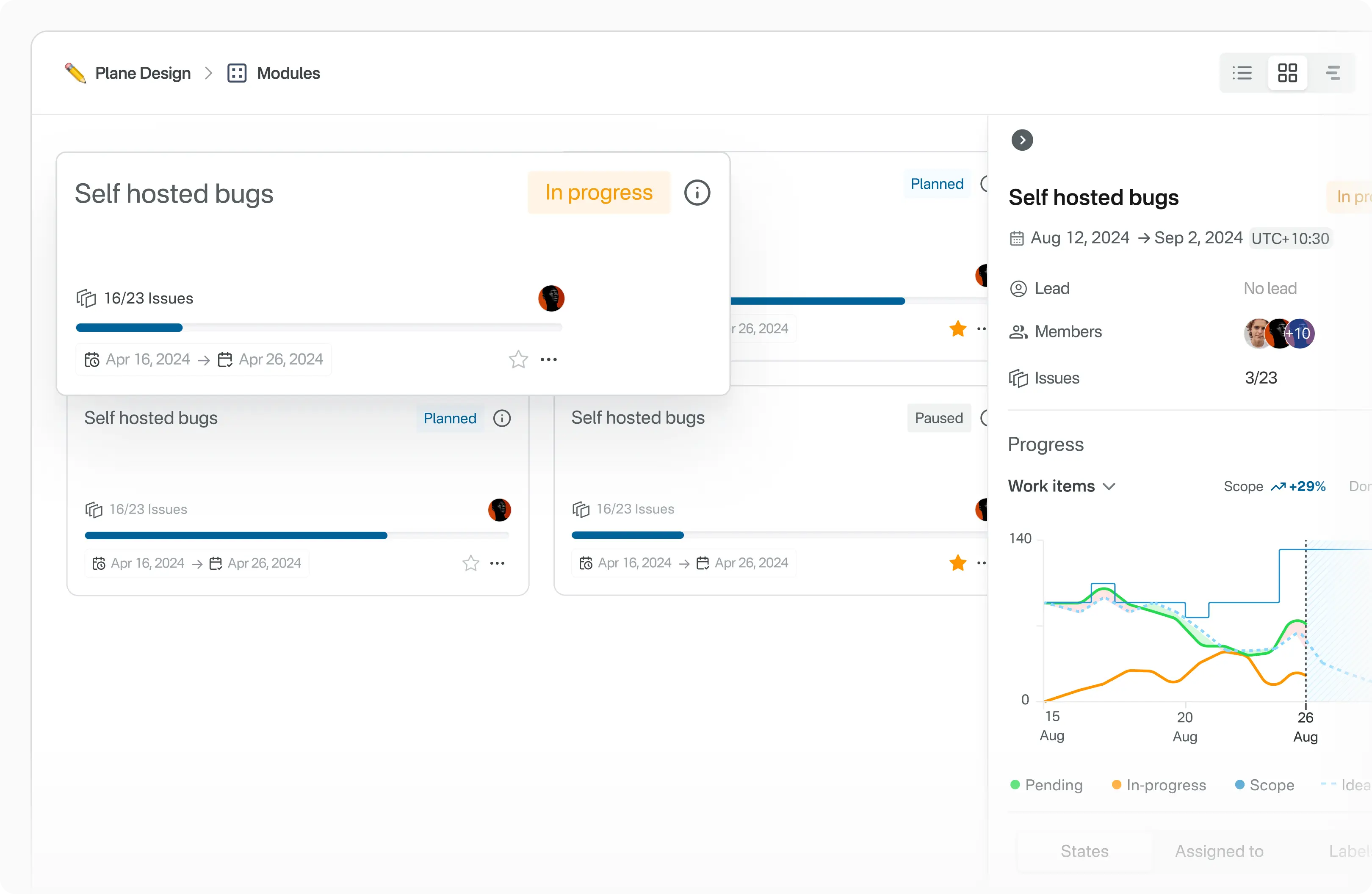

- **Modules**

-Simplify complex projects by dividing them into smaller, manageable modules.

+ Simplify complex projects by dividing them into smaller, manageable modules.

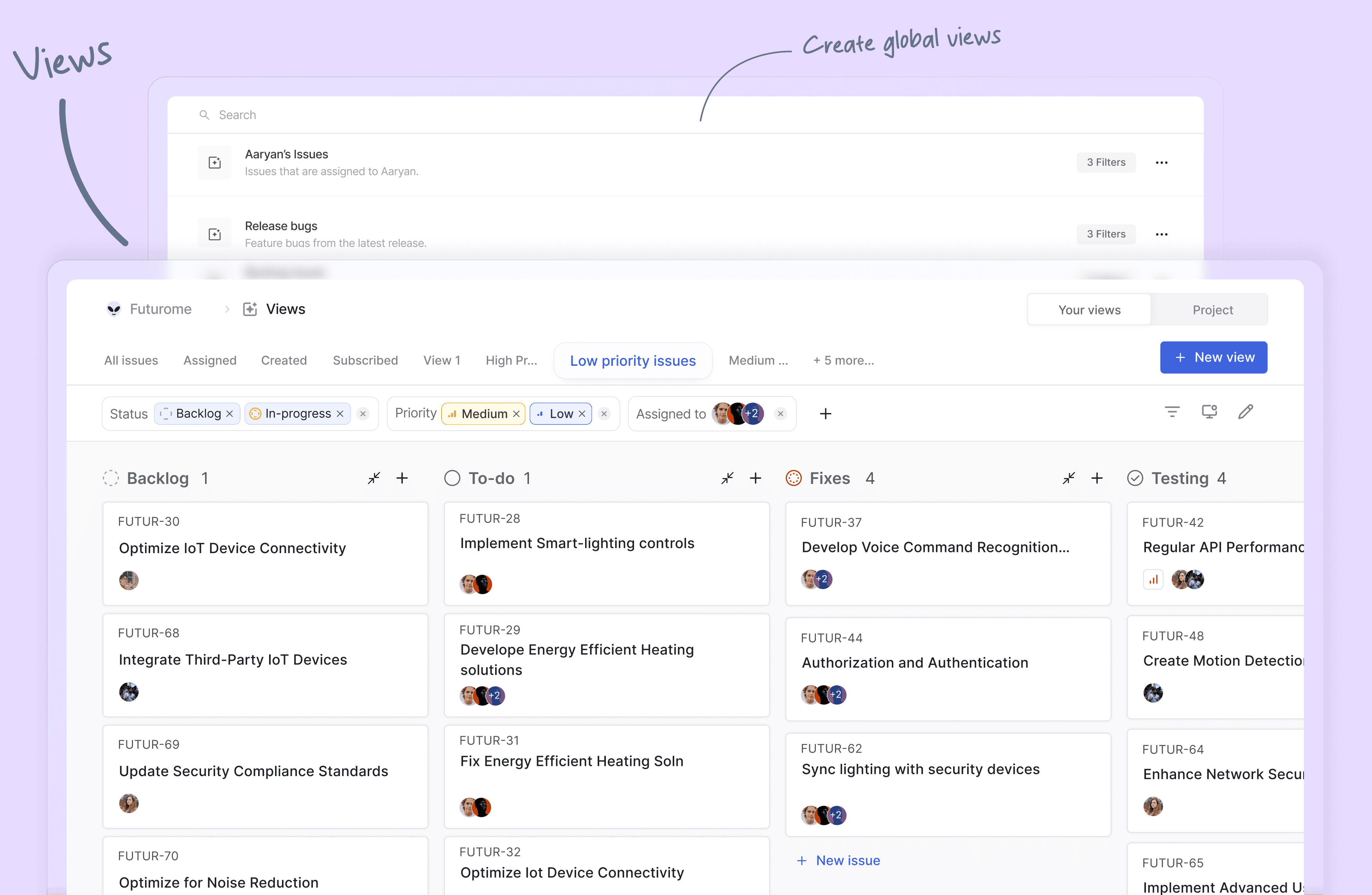

- **Views**

-Customize your workflow by creating filters to display only the most relevant issues. Save and share these views with ease.

+ Customize your workflow by creating filters to display only the most relevant issues. Save and share these views with ease.

- **Pages**

-Capture and organize ideas using Plane Pages, complete with AI capabilities and a rich text editor. Format text, insert images, add hyperlinks, or convert your notes into actionable items.

+ Capture and organize ideas using Plane Pages, complete with AI capabilities and a rich text editor. Format text, insert images, add hyperlinks, or convert your notes into actionable items.

- **Analytics**

-Access real-time insights across all your Plane data. Visualize trends, remove blockers, and keep your projects moving forward.

+ Access real-time insights across all your Plane data. Visualize trends, remove blockers, and keep your projects moving forward.

- **Drive** (_coming soon_): The drive helps you share documents, images, videos, or any other files that make sense to you or your team and align on the problem/solution.

-

## 🛠️ Local development

See [CONTRIBUTING](./CONTRIBUTING.md)

## ⚙️ Built with

+

[](https://nextjs.org/)

[](https://www.djangoproject.com/)

[](https://nodejs.org/en)

## 📸 Screenshots

-

- -

-

-

-  +

+

+

+

@@ -123,7 +115,7 @@ See [CONTRIBUTING](./CONTRIBUTING.md)

@@ -132,25 +124,16 @@ See [CONTRIBUTING](./CONTRIBUTING.md)

-

-

-

-

-

## License

+

This project is licensed under the [GNU Affero General Public License v3.0](https://github.com/makeplane/plane/blob/master/LICENSE.txt).

diff --git a/apps/admin/.eslintignore b/apps/admin/.eslintignore

new file mode 100644

index 000000000..27e50ad7c

--- /dev/null

+++ b/apps/admin/.eslintignore

@@ -0,0 +1,12 @@

+.next/*

+out/*

+public/*

+dist/*

+node_modules/*

+.turbo/*

+.env*

+.env

+.env.local

+.env.development

+.env.production

+.env.test

\ No newline at end of file

diff --git a/apps/admin/.eslintrc.js b/apps/admin/.eslintrc.js

index 666f5ab50..1662fabf7 100644

--- a/apps/admin/.eslintrc.js

+++ b/apps/admin/.eslintrc.js

@@ -1,5 +1,4 @@

module.exports = {

root: true,

extends: ["@plane/eslint-config/next.js"],

- parser: "@typescript-eslint/parser",

};

diff --git a/apps/admin/.prettierignore b/apps/admin/.prettierignore

index 43e8a7b8f..3cd6b08a0 100644

--- a/apps/admin/.prettierignore

+++ b/apps/admin/.prettierignore

@@ -2,5 +2,5 @@

.vercel

.tubro

out/

-dis/

-build/

\ No newline at end of file

+dist/

+build/

diff --git a/apps/admin/Dockerfile.admin b/apps/admin/Dockerfile.admin

index 01884206e..6bfa0765f 100644

--- a/apps/admin/Dockerfile.admin

+++ b/apps/admin/Dockerfile.admin

@@ -1,5 +1,11 @@

+# syntax=docker/dockerfile:1.7

FROM node:22-alpine AS base

+# Setup pnpm package manager with corepack and configure global bin directory for caching

+ENV PNPM_HOME="/pnpm"

+ENV PATH="$PNPM_HOME:$PATH"

+RUN corepack enable

+

# *****************************************************************************

# STAGE 1: Build the project

# *****************************************************************************

@@ -7,7 +13,8 @@ FROM base AS builder

RUN apk add --no-cache libc6-compat

WORKDIR /app

-RUN yarn global add turbo

+ARG TURBO_VERSION=2.5.6

+RUN corepack enable pnpm && pnpm add -g turbo@${TURBO_VERSION}

COPY . .

RUN turbo prune --scope=admin --docker

@@ -22,11 +29,13 @@ WORKDIR /app

COPY .gitignore .gitignore

COPY --from=builder /app/out/json/ .

-COPY --from=builder /app/out/yarn.lock ./yarn.lock

-RUN yarn install --network-timeout 500000

+COPY --from=builder /app/out/pnpm-lock.yaml ./pnpm-lock.yaml

+RUN corepack enable pnpm

+RUN --mount=type=cache,id=pnpm-store,target=/pnpm/store pnpm fetch --store-dir=/pnpm/store

COPY --from=builder /app/out/full/ .

COPY turbo.json turbo.json

+RUN --mount=type=cache,id=pnpm-store,target=/pnpm/store pnpm install --offline --frozen-lockfile --store-dir=/pnpm/store

ARG NEXT_PUBLIC_API_BASE_URL=""

ENV NEXT_PUBLIC_API_BASE_URL=$NEXT_PUBLIC_API_BASE_URL

@@ -49,7 +58,7 @@ ENV NEXT_PUBLIC_WEB_BASE_URL=$NEXT_PUBLIC_WEB_BASE_URL

ENV NEXT_TELEMETRY_DISABLED=1

ENV TURBO_TELEMETRY_DISABLED=1

-RUN yarn turbo run build --filter=admin

+RUN pnpm turbo run build --filter=admin

# *****************************************************************************

# STAGE 3: Copy the project and start it

@@ -91,4 +100,4 @@ ENV TURBO_TELEMETRY_DISABLED=1

EXPOSE 3000

-CMD ["node", "apps/admin/server.js"]

\ No newline at end of file

+CMD ["node", "apps/admin/server.js"]

diff --git a/apps/admin/Dockerfile.dev b/apps/admin/Dockerfile.dev

index edf82d227..0b82669c4 100644

--- a/apps/admin/Dockerfile.dev

+++ b/apps/admin/Dockerfile.dev

@@ -5,8 +5,8 @@ WORKDIR /app

COPY . .

-RUN yarn global add turbo

-RUN yarn install

+RUN corepack enable pnpm && pnpm add -g turbo

+RUN pnpm install

ENV NEXT_PUBLIC_ADMIN_BASE_PATH="/god-mode"

@@ -14,4 +14,4 @@ EXPOSE 3000

VOLUME [ "/app/node_modules", "/app/admin/node_modules" ]

-CMD ["yarn", "dev", "--filter=admin"]

+CMD ["pnpm", "dev", "--filter=admin"]

diff --git a/apps/admin/app/(all)/(dashboard)/authentication/gitlab/page.tsx b/apps/admin/app/(all)/(dashboard)/authentication/gitlab/page.tsx

index cdcfcd61b..f0b464acb 100644

--- a/apps/admin/app/(all)/(dashboard)/authentication/gitlab/page.tsx

+++ b/apps/admin/app/(all)/(dashboard)/authentication/gitlab/page.tsx

@@ -66,9 +66,11 @@ const InstanceGitlabAuthenticationPage = observer(() => {

- Manage your Plane instance

-

-